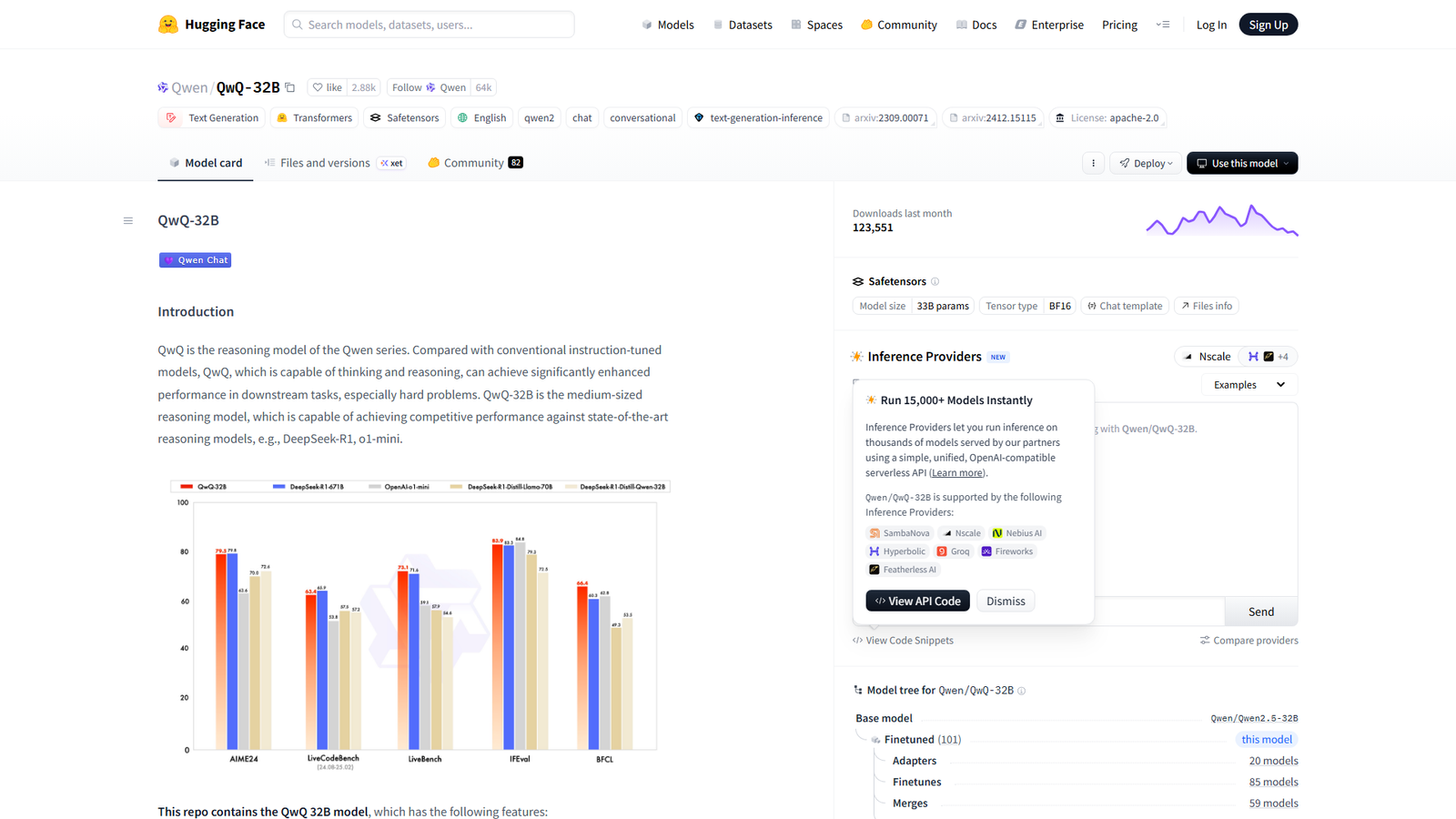

QwQ-32B

Matching R1 reasoning yet 20x smaller

About

QwQ-32B is a medium-sized reasoning model (32.5B parameters) from the Qwen series, designed to improve performance on complex downstream tasks through explicit thinking and reasoning. It is a causal language model built on a transformer architecture with RoPE, SwiGLU, and RMSNorm. It supports a context length of 131,072 tokens, with YaRN used for extended inputs. The model is available on Hugging Face, along with a quickstart guide, usage guidelines, and evaluation benchmarks. It aims to compete with state-of-the-art reasoning models such as DeepSeek-R1 and o1-mini.
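For readers unfamiliar with two of the components named above, the following is a minimal pure-Python sketch of RMSNorm and the elementwise gating step of SwiGLU. It is illustrative only and is not the Qwen implementation: the function names, the `eps` default, and the omission of the surrounding linear projections are all assumptions made for brevity.

```python
import math


def rms_norm(x, weight, eps=1e-6):
    """Divide x by its root mean square, then apply a per-channel weight.

    eps is a small stabilizer; its default here is an assumed value.
    """
    mean_sq = sum(v * v for v in x) / len(x)
    inv_rms = 1.0 / math.sqrt(mean_sq + eps)
    return [v * inv_rms * w for v, w in zip(x, weight)]


def silu(v):
    """SiLU (Swish) activation: v * sigmoid(v)."""
    return v / (1.0 + math.exp(-v))


def swiglu_gate(gate, up):
    """Elementwise SwiGLU gating: silu(gate) * up.

    In a full feed-forward block, gate and up come from two separate
    linear projections of the input, and a third projection follows.
    Those projections are omitted in this sketch.
    """
    return [silu(g) * u for g, u in zip(gate, up)]


# Tiny demonstration on hand-picked vectors (hypothetical inputs).
x = [3.0, 4.0]
normed = rms_norm(x, [1.0, 1.0], eps=0.0)
gated = swiglu_gate([0.0, 2.0], [5.0, 1.0])
```

After normalization with unit weights and `eps=0.0`, the mean of the squared outputs is 1, which is the property RMSNorm is built to enforce before the learned weight is applied.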

Color Palette

Hugging Face Blue: #536af5

White Background: #FFFFFF

Text Black: #000000

Dark Gray Text/Secondary: #333333

Typography

Sans-serif: Headings, Body Text, Navigation

Similar Products

Clear for Slack

Clear messages get answered quicker

Griply 2026

Achieve your goals with a goal-oriented task manager

HappyMail

We made email simple again

Blober.io

The easiest way to transfer files between cloud providers.

Supaguard

Scan, Detect & Protect Your Supabase Data

Timelines Time Tracking 4

Track your time to achieve your New Year resolutions.

SoftReveal — Reveal less. Engage more.

Hide Content, Reveal on Click

CalPal

The notebook calculator that thinks for you (now with AI).

Reword

Rewrite messages without leaving your workflow

MoovAI

Launch viral AI ads & pro social content in minutes

Resell AI

Reselling workflow with market-based price suggestions

Qwen-Image-2512

SOTA open-source T2I model with even greater realism